-

Wow cool! Yea, I think the word “downmix” was causing me to think it referred to a situation where *I* would be needing to downmix something; that’s why I was asking. But I see now you were simply naming them that way to point out that you figured out a cool mixing technique which just happens to sound really good. If I am understanding correctly, their function and purpose are the same as the ones without “downmix” in the name, but with a different sonic result by using your techniques to achieve stereo or surround, etc. I will explore that later when I have an actual way to hear 3D monitoring somehow.

-

Something else I want to say that I was pondering last night. So the prospect of being able to monitor the full spherical beauty of 3rd order ambisonics through binaural headphones is intriguing to me. It might be the closest we can get to replicating the experience of actually being there in the room, standing at the mic position listening. That being said, that is often not the sound we want to hear or are accustomed to hearing on a recording, for films or otherwise; we are accustomed to hearing orchestras that were recorded in a room with some kind of mic array, and not usually any kind of binaural mic setup either, but rather with mic arrays that enhance stereo or enhance surround or enhance this or that thing to create a sound that is actually different from what would be heard if you were standing there. Similar but different. I think it’s intriguing to think about using the ambisonic output format to try to hear what may be the closest experience to actually being there, maybe; but that is probably unlikely to be the best desired sound for a recording. The various mic array options in MirPro let us get those different impressions of the room that translate well for recordings and how we are accustomed to hearing recordings. The downmix versions are just getting even a bit more sound engineering than the mic array alone, to enhance the final sonic image through mixing techniques, compliments of Dietz and his years of engineering experience, translating the mic arrays even further through the matrix. I totally get it. I will have to explore all of these later and read carefully the notes Dietz added to each one.

-

I will explore that later when I have an actual way to hear 3D monitoring somehow

Just to avoid possible misunderstandings: You don't need anything fancy for a stereo downmix of a spherical decoding from HOA. It's just that: stereo. 😊

Enjoy!

/Dietz - Vienna Symphonic Library -

[...] I totally get it. I will have to explore all of these later and read carefully the notes Dietz added to each one.

Thanks for the friendly words! You're definitely on the right track.

Just to keep things in perspective: I may know a thing or two about audio engineering and music mixing, that's true. But please keep in mind that HOA and its intricacies are themselves an area where even I don't have "many years" of experience, just a little over two. MIR 3D as a production-ready application is even younger than that. So we're all here really just scratching the surface so far.

The best is yet to come, I'm sure. 😊

/Dietz - Vienna Symphonic Library -

You don't need anything fancy for a stereo downmix of a spherical decoding from HOA. It's just that: stereo. 😊

One strange question that occurred to me this morning. So basically you're saying that the "sphere" of ambisonic information is there in MirPro3D and we are pretty much always folding audio down to some number of outputs....could be stereo or surround or even 7.1.2 immersive... But it's always being folded down..it's just HOW it's folded down that will differ in the virtual mic array techniques employed.

But here's the new question... While MirPro3D has encoded a 360 degree sphere of information for any XYZ point in space where the mic array is placed... When we play it back eventually on some system that supports say 5.1.2, we don't have any speakers to represent the bottom half of the sphere. We have ear level, and we have above the head level...but unless I'm missing something, we don't have anything to play back sounds below us.

How does MirPro3D translate sounds from below the mic array into playback systems, which really are only half of a sphere if you think about it? If I'm understanding that right, which I very well might not be.

I guess one could assume that it gets folded into the ear level speakers in some way, but it makes an interesting case for not putting the mic array too high in the air, as that would squash a large amount of sonic information into the ear level rather than below the mics as it actually is. Am I making sense?

-

Very good question! This is where the so-called "coefficients" come into play, i.e. the data we refer to and load in the lower half of the Output Format Editor. Call them "virtual speakers", if you like, which can, but don't have to relate to physical speakers.

To avoid even more complexity in an application that might be overwhelming already we decided to leave at least _that_ part of the equation to the specialists of our academic development partners at IEM Graz. They offer a fantastic, Ambisonics-focused software suite (freeware!) that contains the tool we use to create our sets of coefficients.

... explaining the whole concept is too much for a little forum posting. Please continue reading here:

-> https://plugins.iem.at/docs/allradecoder/

All of this is ongoing recent development, so we might see/hear even better "coefficients" in the not-so-distant future. :-)
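For readers who want to see the "coefficients as virtual speakers" idea in numbers, here is a minimal sketch. It is not the AllRADecoder algorithm and not MIR 3D's actual coefficient sets. It assumes first-order Ambisonics only (ACN channel order W, Y, Z, X with SN3D scaling), a hypothetical 5.0.2-style layout with made-up speaker angles, and a very simple decoder in which every speaker is fed by a virtual cardioid pointing in its direction. Each speaker then gets one row of four coefficients, and decoding is just a weighted sum of the Ambisonics channels.

```python
import math

def unit_vector(az_deg, el_deg):
    """Direction as (x, y, z): x = front, y = left, z = up."""
    az, el = math.radians(az_deg), math.radians(el_deg)
    return (math.cos(az) * math.cos(el),
            math.sin(az) * math.cos(el),
            math.sin(el))

def encode_first_order(az_deg, el_deg):
    """First-order encoding gains in ACN order (W, Y, Z, X), SN3D scaling."""
    x, y, z = unit_vector(az_deg, el_deg)
    return [1.0, y, z, x]

def decoder_row(az_deg, el_deg):
    """Coefficients of a virtual cardioid aimed at one speaker (assumption:
    a deliberately simple decoder, not AllRAD)."""
    x, y, z = unit_vector(az_deg, el_deg)
    return [0.5, 0.5 * y, 0.5 * z, 0.5 * x]

# Hypothetical layout: name -> (azimuth, elevation) in degrees.
LAYOUT = {
    "C": (0, 0), "L": (30, 0), "R": (-30, 0),
    "Ls": (110, 0), "Rs": (-110, 0),
    "TL": (90, 45), "TR": (-90, 45),
}

# One row of coefficients per speaker: this table plays the role of the decoder.
DECODER = {name: decoder_row(az, el) for name, (az, el) in LAYOUT.items()}

def decode(ambi):
    """Speaker gains = coefficient row dotted with the Ambisonics channels."""
    return {name: sum(c * a for c, a in zip(row, ambi))
            for name, row in DECODER.items()}

if __name__ == "__main__":
    # A source in front of and 60 degrees below the microphone position.
    for name, gain in decode(encode_first_order(0, -60)).items():
        print(f"{name:>2}: {gain:.3f}")
```

With this toy decoder, a source from below does not vanish: it is distributed over the existing speakers, mostly the ear-level ones closest to its direction, and only weakly into the height speakers. Real decoders such as AllRAD balance the energy far more carefully, which is exactly what the linked documentation explains.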

HTH,

/Dietz - Vienna Symphonic Library -

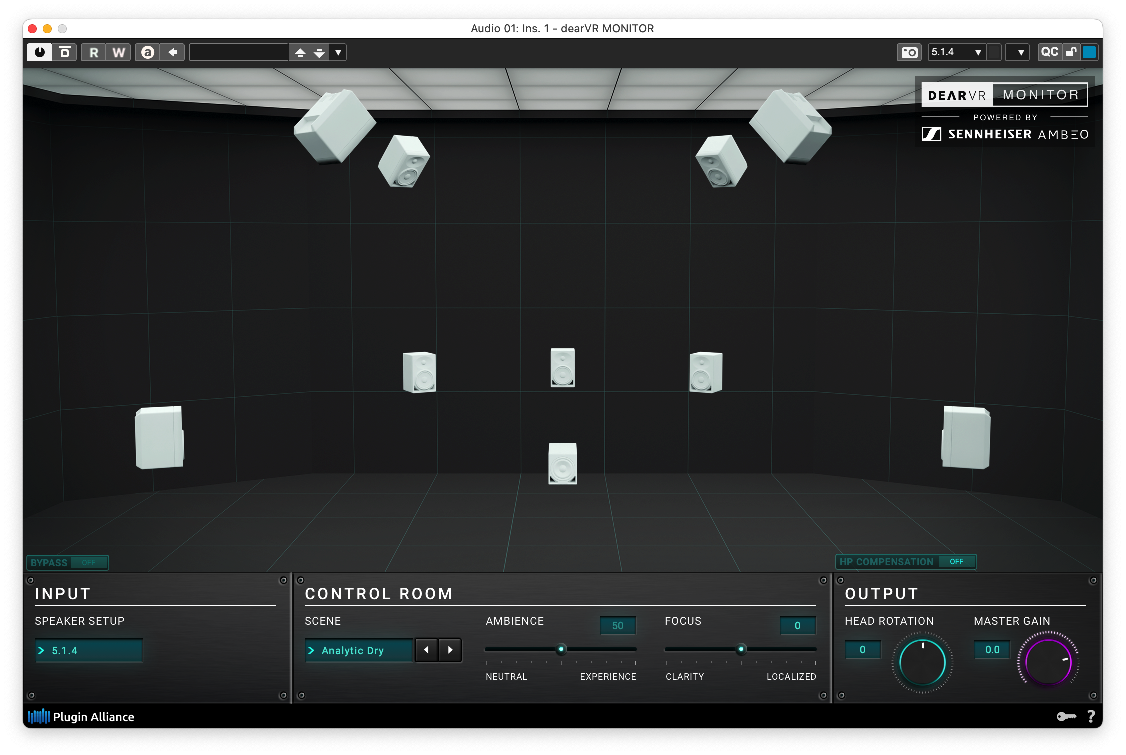

Thanks so much Dietz & Dewdman for this very instructive conversation. The fog has cleared. You have addressed the struggles I was having (in a previous thread) until I realized the Dolby-Apple Logic side of the equation was causing all the confusion in converting legacy MIR projects to the new 3D and, like Dewdman, was anxious to “hear” the new MIR itself – without having to assign near, mid & far in the Atmos plugin…I have no clue what that would do to the MIR audio. The coming tutorial videos will no doubt make things even clearer. But for now, I have opted for the Dear VR Monitor solution which seems to be able to render MIR Pro 3D’s environment as intended. Thanks again for your thoroughness.

PS. And Dewdman, I have appreciated & well used your VSL AU3 Logic templates. Many thanks.

-

But for now, I have opted for the Dear VR Monitor solution which seems to be able to render MIR Pro 3D’s environment as intended. Thanks again for your thoroughness.

Oh that is interesting. dearVR is handling binaural encoding differently than Dolby? That kind of makes sense though...so something for me to investigate further.

I was just reading an article yesterday about how Apple's binaural encoder is different from Dolby's.. Apple uses theirs for their so-called "Spatial Audio" feature on Apple Music. A lot of people had problems though because Atmos productions did not translate well after having been produced and presumably monitored using an Atmos system...or perhaps Dolby's monitoring...then on Apple Music as so-called "Spatial Audio" it would sound totally wrong...in particular it seems to do with how the center channel is handled, and Apple's version tends to make everything wider than life.....or something to that extent. In any case, last year Apple added actual Dolby Atmos as also being playable from AppleMusic now...perhaps because of these binaural compatibility problems.

So yea I'm curious how dearVR's binaural would differ from Dolby's.

Apple's also does not have near, mid, far stuff. But when I have listened to some test binaural recordings, everything sounded like it was just barely outside my skull. I could hear things moving around in front and around the sides and the back of my head..just barely. I think Dolby is adding, perhaps, some processing to get things to sound further away from our head. But then, that is what MirPro3D is supposed to do...so I guess in LogicPro you would want to monitor MirPro3D with the Dolby renderer, and make sure to set the speakers to OFF (not near, mid or far). That way it won't be interjecting any more distance related processing that might interfere with what MirPro3D is doing.

Well I'm the kind of person that when I listen to headphones it generally sounds like it's inside my head....so having something put the sounds outside my head is a welcome improvement, but it's not the same as listening to loudspeakers that are surrounding you.

-

Very good question! This is where the so-called "coefficients" come into play, i.e. the data we refer to and load in the lower half of the Output Format Editor. Call them "virtual speakers", if you like, which can, but don't have to relate to physical speakers.

That is very interesting. Imaginary loudspeakers eh. Is there any way I can view the actual JSON for the factory presets that are using custom coefficients? Are they found anywhere after install or just buried inside the MirPro3D binary?

So another thought/question I have is that in a real world situation, SOME of the sound coming "from below", would make it into the mics in a real recording scenario. They wouldn't be spatially represented during playback as being from below; in a playback system that only has speakers at or above ear level...but still some of that reflected sound off the ground, etc..would go into the mics and be present in any kind of recording. yea?

I notice that the factory preset output formats only include virtual loudspeakers (and their coefficient parameters), for the 3D presets. The other presets don't seem to have them for some reason. Why is that? If nothing else, don't they also need to handle the "below head level" sounds in some way?

I also noticed in the iem.at docs a comment about 1st vs 3rd order ambisonics...and they made a comment that 1st order are smoother panning, while 3rd order are bumpier panning. Which might explain what the "Ambisonics Order Blend" control is for? There are only a couple of the factory presets that actually have that control set to anything other than 100% first order. So I'm curious what the implications of that are, and when or why some of the presets have sometimes nudged it halfway towards 3rd order ambisonics?

-

You see the used coefficients in the left part of the lower half of the Output Format Editor. The list is a bit ugly (... waiting for a GUI update ;-) ...), but you see the speaker index and its coordinates (angles, actually).

Yes, an ideal Ambisonics reproduction system would be spherical, too, with the listener in the center. This is not easy to get in Real Life, that's why this genius IEM AllRADecoder offers so-called "imaginary speakers". They aren't visible in MIR 3D and serve no direct reproduction tasks, but they allow for balancing the geometry (and thus the energy dispersion) within an "imperfect" sphere. ... this topic is not my forte, I have to learn a lot myself in this field.

Re: Capsules in 3rd Order: Just try it yourself. Push the blender towards 100% 3rd order and watch the shape of a beautiful Fig-8 capsule derail completely. ;-D ... this is what the guys at IEM describe as "bumpy". There is no real-world equivalent for these shapes, and they are quite hard to handle. The Blender (invented by VSL's chief software developer, Martin Saleteg, IIRC) tries to offer workarounds, so we can have it all: Better imaging, embracing space _and_ smooth panning.
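To make the "bumpy" shapes a little more tangible, here is a small sketch under loud assumptions: it is not how MIR 3D's capsules or the Blender are implemented. It only compares a textbook first-order Fig-8 pattern, cos(θ), with a pure third-order axisymmetric term, the Legendre polynomial P3(cos θ), and crossfades linearly between the two with a hypothetical `blend` parameter. The function names are made up for illustration.

```python
import math

def legendre3(x):
    """Legendre polynomial P3(x), the axisymmetric third-order term."""
    return (5 * x ** 3 - 3 * x) / 2

def figure8_first_order(theta_deg):
    """Classic Fig-8 sensitivity: cos(theta), a single null at 90 degrees."""
    return math.cos(math.radians(theta_deg))

def third_order_shape(theta_deg):
    """Stand-in for 'a third-order shape' (assumption: pure P3 term)."""
    return legendre3(math.cos(math.radians(theta_deg)))

def blended(theta_deg, blend):
    """Linear crossfade (assumption): 0.0 = first order, 1.0 = third order."""
    return ((1 - blend) * figure8_first_order(theta_deg)
            + blend * third_order_shape(theta_deg))

def count_nulls(blend):
    """Count sign changes of the pattern between 0 and 180 degrees."""
    values = [blended(k + 0.5, blend) for k in range(180)]
    return sum(1 for a, b in zip(values, values[1:]) if a * b < 0)

if __name__ == "__main__":
    for blend in (0.0, 0.25, 0.5, 1.0):
        print(f"blend {blend:.2f}: {count_nulls(blend)} null(s) from front to back")
```

In this toy model the on-axis sensitivity stays the same for every blend value, but once the third-order share gets large enough, extra nulls and side lobes appear between front and back. Those extra lobes are one plausible reading of "bumpy", and they have no counterpart in a physical Fig-8 capsule.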

/Dietz - Vienna Symphonic Library -

Re: Capsules in 3rd Order: Just try it yourself. Push the blender towards 100% 3rd order and watch the shape of a beautiful Fig-8 capsule derail completely. ;-D ... this is what the guys at IEM describe as "bumpy". There is no real-world equivalent for these shapes, and they are quite hard to handle. The Blender (invented by VSL's chief software developer, Martin Saleteg, IIRC) tries to offer workarounds, so we can have it all: Better imaging, embracing space _and_ smooth panning.

I guess 1st order is not as precise..the big bubble is somewhat ambiguous about the precise imaging of any given sound within that area...while 3rd order is more precise.....but 3rd order has some dead spaces... So it's only more precise for capturing the sound that is not in a dead spot. The 1st order will not have so many dead spots...but then you lose exact spatialization... something like that. Obviously 7th order would hypothetically have even more precision and perhaps fewer dead spots, but would be unusable on my 2010 MacPro. The blender lets us get a little in-between in some way, but when I drag it around I see the 3D photo distort in ways that don't make any sense to me to understand what it might be doing to the sound. hehe.

Well, aside from tweaking around with it and listening, I don't think I would be able to consciously do anything with that blender control or even the coefficients, to make my own user presets... Maybe someday. For now I will stick to using Factory presets...once I figure out which ones are the ones I prefer.
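As a side note on why higher orders get heavy so quickly: a full-sphere Ambisonics signal of order N carries (N + 1)² channels. The tiny sketch below only illustrates that formula; how much CPU a given order actually costs inside MIR 3D is not something it can tell you.

```python
def ambisonic_channels(order):
    """Number of channels in a full-sphere Ambisonics signal of a given order."""
    return (order + 1) ** 2

if __name__ == "__main__":
    for order in (1, 3, 7):
        print(f"order {order}: {ambisonic_channels(order)} channels")
```

So going from 3rd to 7th order means four times as many channels for every signal that passes through the engine.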

-

But for now, I have opted for the Dear VR Monitor solution which seems to be able to render MIR Pro 3D’s environment as intended. Thanks again for your thoroughness.

I finally got some binaural working with Logic Pro and MirPro3D. First thing I want to say is WOW! The binaural rendition most definitely improves the depth perception and imaging as I move a piano around the venue... When I put the piano at the hot spot at the back of the hall...it definitely sounds like it's in the back!!! Bravo VSL, this sounds amazing.

Regarding which binaural encoder to use: I just downloaded and tried out dearVRmonitor from Plugin Alliance, currently on sale for $150. I will probably end up buying this.

The built-in binaural encoders in LogicPro are Apple's and Dolby's.

The Apple one is optimized to represent their AppleMusic Spatial Audio format. I personally don't see a point of using that at all for any reason now that AppleMusic supports Dolby Atmos.

The Dolby Atmos one sounds better to me and has the ability to add some room acoustics in the NEAR, MID, FAR category. More on that in a minute.

But I definitely got better monitoring sound out of dearVRmonitor than Dolby's. Partly that could be because of the ability to choose my headphone profile, partly it could be due to dearVR's clarity and ambience sliders which can dial in something like Dolby's NEAR, MID, FAR. Also I really like that it has various room profiles in order to compare a mix in different virtual listening environments.

That being said, it starts to become a bit of a rabbit hole about what listening environment you would want to use while mixing for distribution. Is it going to be listened to mainly on AppleMusic with AirPods? Is it going to be mainly listened to on home theater Atmos systems? Or will it be at a big live venue, etc.? I'm not sure right now whether it would be preferable to use Dolby's renderer, if you are going to distribute as Dolby Atmos...because it's close to what you're actually going to hear...but maybe it's not, dearVR is providing the headphone modeling which may actually be truer in that regard. Also it's not clear what Dolby's NEAR, MID, FAR exactly do. But this is what every mix engineer faces every day, trying to make a mix that will translate to many playback systems and work reasonably well on all of them that matter. DearVRmonitor definitely brings more options for trying different listening environments...and as well I was easily able to get a very nice sounding binaural monitoring environment....which may or may not translate to the best final mixes...but sure sounds good on my headphones while I'm here at home playing around... So dearVRmonitor is definitely on my list now. I'm also inspired to upgrade my studio to actual 5.1 monitoring at some point, but that is a few thousand dollars away, so dearVRmonitor will be it for the short term. I'm impressed.

And I'm extremely impressed by the 3D imaging I hear through binaural headphones with MirPro3D. If I had known how much dearVRmonitor would improve that I probably would have gotten it a long time ago to use the old MirPro in 2D! It really makes MirPro imaging much more clear to hear. How all of that translates to stereo mix downs...I'm not 100% sure...but I can easily explore those differences by using a tool like dearVRmonitor and it's just kind of enjoyable to hobby at home with VSL libraries using this.

That being said, all of the encoders I used hit the CPU quite hard any time I was recording a live track, even with some significant latency and a larger buffer. So it's probably mainly suitable for mix down only IMHO.

-

WOW indeed! When you get that binaural working, MIR Pro 3D nearly overwhelms you with its clarity and precision. I see why (maybe that should be, I hear why) the folks at Vienna use the dear vr monitor. And I agree, wish I'd been using it for some time. Those cathedral settings are simply awe inspiring. Well, can't say enough. But you are right, when it comes to sharing or delivery...can't imagine in this world we'd have a universal standard. But for now, hearing is believing.

-

Those cathedral settings are simply awe inspiring.

For actual mixing work I strongly suggest sticking to the scene "Analytic Dry" (or "Analytic Position"), though, with Ambience and Focus left untouched at default center position. 😊

/Dietz - Vienna Symphonic Library -

This could be your 9.1 speaker system (see below).

https://www.vsl.co.at/community/resource.ashx?a=5131

It's a bit like watching movies at home, not in the cinema. Not the real thing, but not bad either. ;-)

... of course there are quite a few comparable solutions, some of them come directly as built-in tools of modern DAWs.

/Dietz - Vienna Symphonic Library
